- Automate & Grow with A.I.

- Posts

- Model Wars! Assemble your Agents

Model Wars! Assemble your Agents

Not those models, these models. Anthropic (Claude) vs OpenAI vs the World

The Model Wars just went nuclear.

This week, Claude Opus 4.6 dropped from Anthropic (February 5, 2026), claiming the throne as the new frontier leader in key areas like

agentic coding,

long-context reasoning, and

economically valuable knowledge work.

It outperforms OpenAI's GPT-5.2 by 144 Elo points on GDPval-AA (a benchmark for real-world professional tasks in finance, legal, and more), while leading on Terminal-Bench 2.0 for agentic coding and setting new highs on multidisciplinary reasoning tests like Humanity’s Last Exam.

Grok, please explain this to me like I am in 4th grade

Okay, imagine we're talking about super-smart computer brains (called AI models) that try to do hard grown-up jobs really well.

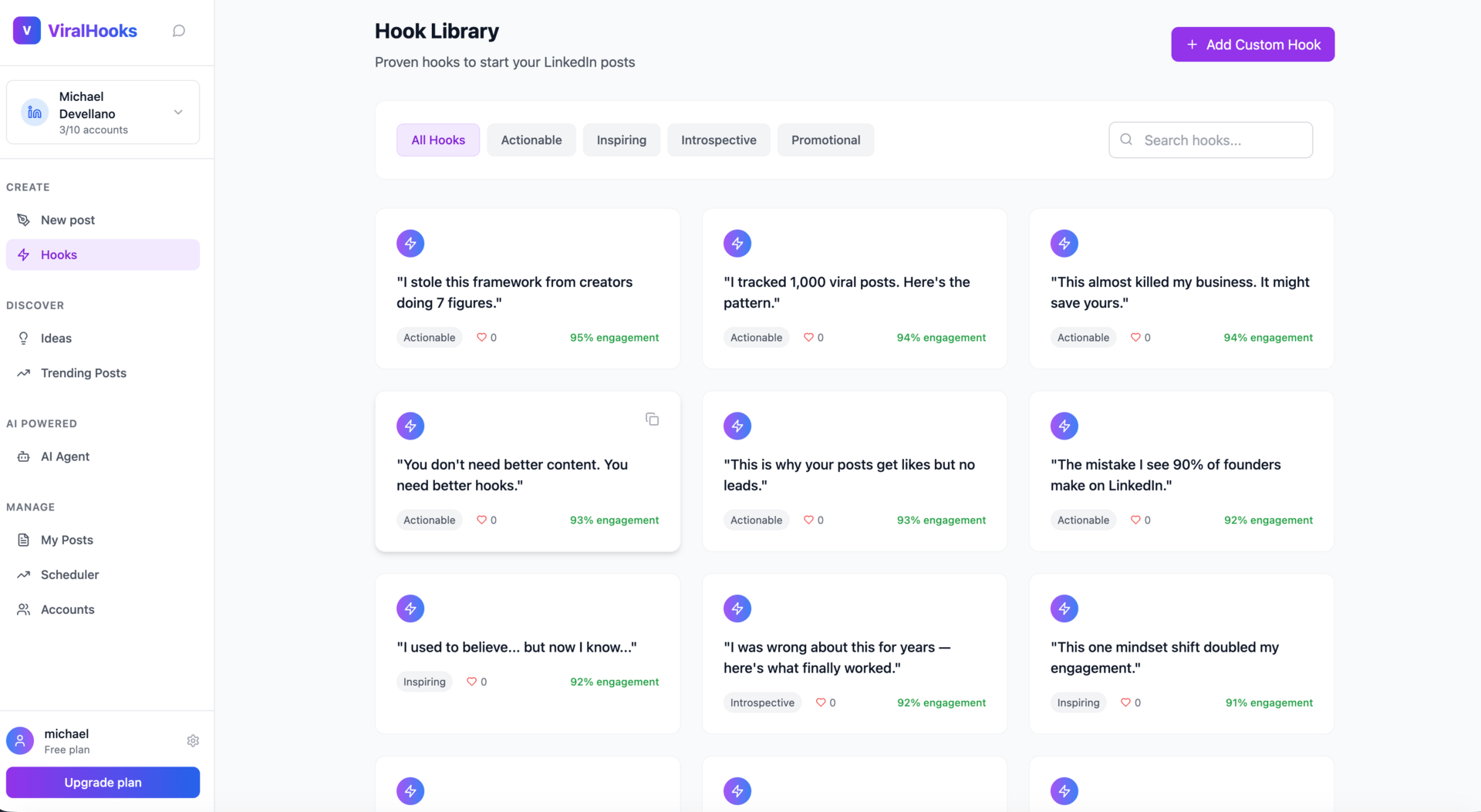

Do you post to LinkedIn? Why not try our AI Agent feature before we launch for $0

Viralhooks.work is a LinkedIn AI Agent that helps mange your content. You can generate content

based upon tone, style of post and category

setup AI Agents based upon topics that produce reviewable content

start LinkedIn posts from a Hooks Library

schedule to one or more LinkedIn connected content calendars

plus you can save, generate and organize post ideas by topic in the Ideas folders

Sign up to the wait list and then create your account to be a beta user and get lifetime $17/mo pricing after beta and 3 months free (again post Beta)

Ok back to our regular AI Programming…

First part: "It outperforms OpenAI's GPT-5.2 by 144 Elo points on GDPval-AA"

Think of Elo points like scores in a big video game tournament or chess matches. Higher number = better player.

If one kid has 1000 points and another has 1144 points, the one with more points usually wins when they play each other.

Here, Claude got 144 more Elo points than GPT-5.2 on a special test. That means Claude wins way more often (like beating the other kid in almost 7 out of 10 games!).

What test? GDPval-AA is like a pretend job test for real work grown-ups do to make money (like money math for banks, writing important papers for lawyers, or other office jobs that help the country make stuff and earn dollars). It's checking if the AI can do those jobs as good as (or better than) real people who get paid for them.

Second part: "while leading on Terminal-Bench 2.0 for agentic coding"

This is another test called Terminal-Bench 2.0.

Imagine a computer "agent" (like a helpful robot helper) that has to type commands in a black screen (called a terminal) to fix problems, build programs, set up games, or do techy jobs all by itself — no help from a person.

Claude is the best at this right now. It can solve more of these tricky coding adventures than the other AIs.

Third part: "and setting new highs on multidisciplinary reasoning tests like Humanity’s Last Exam."

Humanity’s Last Exam is a super-duper hard quiz made by lots of smart teachers and experts.

It has thousands of really tough questions about math, science, history, art — everything! It's like the hardest school test ever invented to see if an AI can think and reason like a top expert human.

Claude got the highest score anyone has seen so far on this test. It means Claude is really good at figuring out hard, mixed-up brain puzzles from all different subjects.

So in kid terms:

Claude Opus 4.6 is like the new champion in a bunch of big AI contests. It beats the old champ (GPT-5.2) by a lot in pretend grown-up jobs, in doing computer commands by itself, and in super-hard thinking tests.

Claude is winning the "smartest computer brain" games right now! 🚀

But benchmarks are table stakes now. Here's what actually matters:

Anthropic didn't just release a model. They demonstrated recursive self-improvement in action.

Using Opus 4.6 with their new "agent teams" feature (16 parallel Claude instances collaborating autonomously on a shared Git repo), they built a complete Rust-based C compiler from scratch.

Capable of compiling Linux kernel 6.9 across x86, ARM, and RISC-V architectures.

Also successfully built major projects like PostgreSQL, SQLite, Redis, FFmpeg, QEMU—and yes, even ran Doom.

The whole experiment: ~2,000 Claude Code sessions over two weeks, consuming billions of tokens at a total cost of just under $20,000.

That's a task historically requiring person-decades of expert engineering effort, now achieved for roughly the price of a used car.

The compiler isn't perfect (some limitations like relying on GCC for certain low-level bits, less efficient output code), but it's a stunning proof-of-concept: an AI system autonomously rewriting foundational parts of the software stack beneath it.

This isn't incremental progress. It's the early stages of recursive self-improvement at production scale—the singularity isn't distant hype; components are shipping today.

OpenAI didn't sit idle.

Within minutes of Opus 4.6's launch (same day, February 5), they countered with GPT-5.3-Codex—their most capable agentic coding model yet.

Key details:

25% faster inference than GPT-5.2-Codex.

Tops benchmarks like 77.3% on Terminal-Bench 2.0 and strong SWE-Bench Pro scores.

Explicitly described as "instrumental in its own development": it helped debug training runs, scale GPU clusters, build eval tools, and more—the first publicly acknowledged case of a model substantially contributing to creating itself.

The leapfrogging cycle has compressed dramatically. We're no longer tracking AI progress in months or years. We're measuring it in minutes.

For builders and businesses automating/growing with AI:

Agent teams + million-token contexts (Opus 4.6's 1M beta) unlock entirely new classes of autonomous software dev.

Self-improving models like GPT-5.3-Codex accelerate iteration loops inside labs and in production tools.

Costs are plummeting while capability explodes—$20K for a cross-architecture compiler is a market-repricing signal for dev services, SaaS infra, and beyond.

The frontier is moving so fast that yesterday's SOTA is today's baseline.

If you're not experimenting with these models today (Claude Code, Cursor integrations, Codex app/CLI), the gap widens by the hour.